Published - 2 months ago | 10 min read

ChatGPT vs Claude: Which AI Assistant Actually Wins for Real Work?

Everyone has an opinion on ChatGPT vs Claude. Some people swear by ChatGPT. Others quietly switched to Claude months ago and never looked back. But most comparisons out there are either too technical for everyday use or too vague to be useful.

This one is different.

We ran both default models through seven real-world tasks that reflect how people actually use AI at work: writing, business decision-making, explaining ideas, financial reasoning, adapting tone, summarizing, and thinking critically. No benchmarks. No lab tests. Just the kind of work that sits in your to-do list every single day.

The models tested were OpenAI's ChatGPT-5.2 and Anthropic's Claude Sonnet 4.6. These are the default versions each company puts forward as its best everyday experience. Here is what happened.

A Quick Note Before We Start

The personal AI assistant market is projected to grow from around $3.4 billion in 2025 to $4.84 billion in 2026, on its way to nearly $20 billion by 2030. That kind of growth does not happen unless people are finding real value in these tools at work. The question is no longer whether AI assistants belong in your workflow. The question is which one actually earns its place there.

Both tools tested here are genuinely useful. Neither one is broken or half-baked. The question is not which one works. The question is which one works better when the task requires judgment, nuance, or real-world framing. That is where the gap becomes visible.

Test 1: Writing Quality

Task: Write a 250-word introduction for a tech article on why AI assistants are becoming everyday productivity tools.

ChatGPT produced a well-structured overview. It broke down the key factors logically, covered the main points, and delivered something clean and readable. If you handed it to an editor, it would not come back with red marks. It got the job done.

Claude did something more interesting. It opened with a scene that made the topic feel real and immediate, then connected the technology to a deeply human idea: getting time back, reducing mental overhead, and doing more of the work that actually requires creativity. It read less like an AI output and more like a piece a senior writer would produce on a good day.

The difference is not about grammar or structure. Both were clean. The difference is that ChatGPT wrote about AI assistants as a subject. Claude wrote about them as something that affects people's lives. That shift in perspective is what makes one piece forgettable and the other one worth reading. For a company blog, a brand story, or any content meant to build trust with readers, that distinction matters more than most people realize.

Winner: Claude. The writing had a point of view, not just a structure.

Test 2: Business Decision-Making

Task: A small business owner spends 12 hours per week answering customer emails and is considering AI automation. Should they do it?

ChatGPT made a solid case for automation. It framed the time cost clearly, laid out the benefits in an easy-to-follow way, and arrived at a reasonable recommendation. Someone reading it would come away feeling informed.

Claude approached this like a consultant who has seen projects fail. It did not jump straight to a recommendation. It started by calculating what those 12 hours are actually worth in dollar terms, then asked a harder question: what kind of emails are they answering? Routine queries about shipping and returns are a very different problem from nuanced customer complaints that require relationship management. Automating the wrong type of email can damage trust faster than it saves time.

From there, Claude mapped out a phased approach. Start with a small, low-risk category of emails. Measure the response. Adjust before expanding. It also flagged the failure modes: over-automation, generic responses that frustrate loyal customers, and the reputational cost of a customer feeling like they are talking to a bot at the wrong moment.

That kind of thinking is the difference between advice and information. ChatGPT gave information. Claude gave advice that a business owner could actually act on without walking into an obvious mistake.

Winner: Claude. When someone is weighing a real business change, they need a realistic picture that includes what can go wrong. Claude delivered that.
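The dollar-value calculation described in this test can be sketched in a few lines. This is only an illustration, not the output either model produced: the $50 hourly value and the 50 percent share of routine emails are hypothetical figures chosen for the example, and the function names are made up here.

```python
def annual_email_cost(hours_per_week: float, hourly_value: float,
                      weeks_per_year: int = 52) -> float:
    """Dollar value of the time spent answering email over a year."""
    return hours_per_week * hourly_value * weeks_per_year


def annual_savings(hours_per_week: float, hourly_value: float,
                   automatable_share: float, weeks_per_year: int = 52) -> float:
    """Value of the time freed if only a share of the emails is automated."""
    if not 0 <= automatable_share <= 1:
        raise ValueError("automatable_share must be between 0 and 1")
    total = annual_email_cost(hours_per_week, hourly_value, weeks_per_year)
    return total * automatable_share


if __name__ == "__main__":
    HOURS_PER_WEEK = 12    # from the task description
    HOURLY_VALUE = 50.0    # hypothetical: what an hour of the owner's time is worth
    ROUTINE_SHARE = 0.5    # hypothetical: share of emails that are routine queries

    print("Yearly cost of email time:", annual_email_cost(HOURS_PER_WEEK, HOURLY_VALUE))
    print("Yearly value if only routine emails are automated:",
          annual_savings(HOURS_PER_WEEK, HOURLY_VALUE, ROUTINE_SHARE))
```

Keeping the automatable share as a separate input mirrors the phased approach: the estimate only counts the low-risk category of emails, not the full 12 hours.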

Test 3: Explaining Complex Ideas Simply

Task: Explain how large language models work to a 12-year-old.

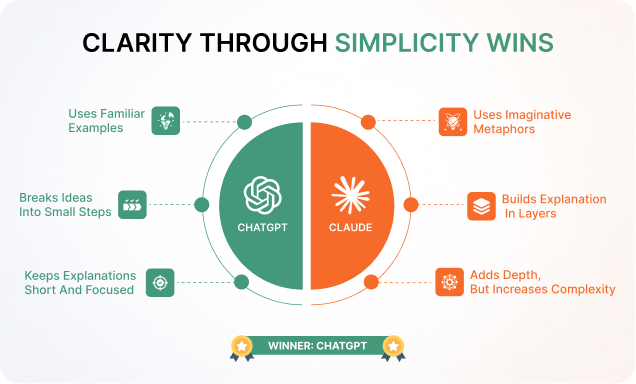

This is the one test where ChatGPT came out ahead, and it is worth understanding why.

ChatGPT anchored the explanation in something every kid knows: the autocomplete on a phone keyboard. From that starting point, it walked through the logic in short steps. The model reads a lot of text. It learns which words tend to follow other words. When you ask it a question, it predicts the most likely useful response. Clean, linear, age-appropriate.

Claude used the metaphor of a well-read friend, which is creative and memorable for an adult audience. But the explanation then built upward in layers of detail that may have outpaced a 12-year-old's patience. The metaphor was good. The execution added complexity where the goal was simplicity.

Explaining something simply is genuinely hard. It requires knowing which details to cut, not just which ones to include. A shorter, cleaner answer that a child follows completely is more valuable than a richer answer that loses them halfway through. ChatGPT showed better discipline here.

Winner: ChatGPT. Simplicity for a young audience requires restraint, and ChatGPT exercised it better.

Test 4: Step-by-Step Financial Reasoning

Task: A freelancer earns $4,000 per month with $2,500 in fixed expenses. They want a $6,000 emergency fund. Build a savings plan and show the reasoning.

ChatGPT was thorough. It flagged an important ambiguity right away: is the $4,000 pre-tax or post-tax income? That is a genuinely useful clarification that most people answering this question would skip. It then ran the numbers for both scenarios and laid out a clean monthly savings plan.

Claude went a step further and built the plan around the uncomfortable reality of freelance finances. Freelancers in most countries are responsible for their own tax payments, which often run between 25 and 35 percent of income depending on location and bracket. That means the $1,500 that looks like disposable income after fixed expenses is not really $1,500. After setting aside a realistic tax reserve, it might be closer to $800 or less.

Claude built the emergency fund plan on that lower, honest number. It also stress-tested the plan against irregular income months, which is a core feature of freelance work that salaried financial advice ignores entirely. What happens in a slow month when income drops to $2,500? Does the savings plan collapse, or does it flex? Claude answered that question without being asked.

The ChatGPT answer was accurate. Claude's answer was realistic. For someone making actual financial decisions, realistic is more useful than accurate on paper.

Winner: Claude. Honest financial planning requires surfacing the parts people would rather not think about, and Claude did exactly that.
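The arithmetic behind this test can be sketched as follows. It is a simplified illustration, not a reproduction of either model's answer: it assumes a flat tax reserve taken as a percentage of gross income, and the function names are hypothetical. Under that assumption, a 25 to 35 percent reserve leaves between $500 and $100 a month, below the roughly $800 mentioned above.

```python
import math
from typing import Optional, Sequence


def monthly_surplus(income: float, fixed_expenses: float, tax_percent: float) -> float:
    """Money left after a flat tax reserve on gross income and fixed expenses.

    Assumption: the reserve is a simple percentage of gross income.
    """
    tax_reserve = income * tax_percent / 100
    return income - tax_reserve - fixed_expenses


def months_to_goal(goal: float, surplus: float) -> Optional[int]:
    """Whole months needed to reach the goal at a steady surplus, or None."""
    if surplus <= 0:
        return None
    return math.ceil(goal / surplus)


def months_with_irregular_income(goal: float, incomes: Sequence[float],
                                 fixed_expenses: float,
                                 tax_percent: float) -> Optional[int]:
    """Walk through month-by-month incomes; a shortfall draws the fund down."""
    balance = 0.0
    for month, income in enumerate(incomes, start=1):
        balance += monthly_surplus(income, fixed_expenses, tax_percent)
        if balance >= goal:
            return month
    return None  # goal not reached within the months provided


if __name__ == "__main__":
    GOAL, INCOME, FIXED = 6000, 4000, 2500  # figures from the task
    for percent in (0, 25, 35):             # 0 = the $4,000 is already post-tax
        surplus = monthly_surplus(INCOME, FIXED, percent)
        print(percent, surplus, months_to_goal(GOAL, surplus))

    # One slow month at $2,500 early in the plan (hypothetical sequence).
    incomes = [4000, 2500] + [4000] * 20
    print(months_with_irregular_income(GOAL, incomes, FIXED, 25))
```

With these assumptions, a 25 percent reserve means a 12-month plan, a 35 percent reserve stretches it to 60 months, and a single $2,500 month produces a shortfall rather than savings, which is why the plan has to flex.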

Test 5: Tone and Style Adaptation

Task: Rewrite a workplace message in three tones: professional, friendly, and persuasive. Original message: the team needs to adopt new software next week or the company risks falling behind competitors.

ChatGPT produced three clean variations. Each one hit the right tone. The professional version was formal and direct. The friendly version softened the language. The persuasive version added urgency. All three were grammatically correct and tonally appropriate.

Claude treated the task as a communication problem rather than a writing exercise. The professional version was written the way a VP of Operations would actually write it, including a clear timeline, an implied consequence, and the kind of confident language that signals seriousness without aggression. The friendly version read like a message from a manager who genuinely wants the team to succeed, not just comply. The persuasive version included a specific hook, framed the change as an opportunity rather than a threat, and ended with a call to action.

The distinction here is between technically correct and professionally usable. ChatGPT gave you three correct versions. Claude gave you three messages you could paste into an email and send without editing. For anyone who uses AI productivity tools to speed up communication at work, that gap in usability is significant.

Winner: Claude. The goal of a rewriting task is to produce something ready to use. Claude understood that.

Test 6: Executive Summarization

Task: Summarize a passage about hybrid work schedules, async communication, and four-day workweeks in five bullet points for a busy executive.

ChatGPT delivered a clean, scannable summary. It covered the key points accurately, kept the language tight, and formatted it in a way that is easy to skim. That is exactly what the task described, and it delivered it.

Claude reframed the task from the executive's perspective. An executive reading a summary does not just want to know what is happening. They want to know what it means for their organization, what decisions it might require, and what to watch for. Claude wrote each bullet as a trend with business implications rather than a description of a fact.

For example, instead of saying "companies are experimenting with four-day workweeks," Claude framed it as a signal about what talent now expects, and what companies that ignore it risk. That is the difference between informing an executive and briefing one. Briefing requires understanding what the person will do with the information, not just what information to include.

Winner: Claude. Among AI productivity tools, writing for a specific reader rather than a generic one is a rare and valuable capability.

Test 7: Critical Thinking and Bias Awareness

Task: Social media algorithms amplify extreme content. Explain why and propose realistic ways platforms could reduce polarization without hurting engagement.

ChatGPT gave a well-organized and genuinely useful response. It explained the mechanics of algorithmic amplification clearly, covered the psychology of outrage-driven engagement, and proposed practical solutions including tweaking recommendation weights, adding friction before resharing, and promoting authoritative sources. Solid analysis.

Claude did something that separated it from a good explainer and made it useful for someone who actually has to work in this space. It named the central problem that most discussions avoid: reducing polarization and growing engagement are not just difficult to balance. They are structurally opposed. Platforms are built on advertising revenue. Advertising revenue scales with time on the platform. Extreme and emotionally provocative content holds attention longer than moderate content. Any intervention that meaningfully reduces polarization will, by definition, reduce the engagement metrics that drive revenue.

Claude then built its proposed solutions from inside that constraint rather than around it. Instead of pretending platforms could simply choose to be less toxic, it asked what changes a platform could make that reduce harm while preserving enough engagement to remain commercially viable. That framing produced more realistic proposals than an analysis that ignores the economic incentives at play.

Naming the honest constraint is not pessimism. It is precision. And in any field where you are advising organizations, writing strategy documents, or explaining complex systems to stakeholders, that precision is exactly what separates useful analysis from noise.

Winner: Claude. Stronger critical thinking, more realistic framing, and a clearer understanding of why the problem is hard to solve.

Overall Score: ChatGPT vs Claude

Claude won six of the seven tests. ChatGPT won one.

That does not mean ChatGPT is a bad tool. ChatGPT still dominates consumer mindshare for good reason: OpenAI reports more than 2.5 billion prompts sent to ChatGPT every day. At that scale, it is clearly doing something right. It is fast, clear, and excellent at making complex things accessible. For tasks that require simplicity and structure, it holds up well. In any ChatGPT vs Claude comparison focused purely on accessibility and ease of explanation, it is a serious competitor.

But when the task requires judgment, Claude performs at a different level. It identifies risks that other answers miss. It writes for real audiences rather than generic ones. It names trade-offs instead of pretending they do not exist. Throughout this comparison, that pattern held in almost every category.

What Each Tool Is Actually Good For

ChatGPT works best when you need something clear, fast, and easy to understand. Teaching a concept to someone new to a topic, building a simple explanation, or producing a clean structured draft are areas where it performs reliably. It is a strong tool for communication that needs to reach a broad or non-specialist audience.

Claude works best when the task has stakes. Business decisions, strategic writing, financial planning, executive communication, critical analysis, and any situation where judgment matters more than speed. It treats prompts as problems to solve rather than questions to answer. It looks for what is missing from the obvious response, not just what belongs in it.

For teams building workflows around AI productivity tools, the distinction matters practically. A tool that produces clean output saves time. A tool that surfaces the uncomfortable and overlooked parts of a problem creates real value.

Conclusion

The ChatGPT vs Claude debate often gets framed as a matter of personal preference. After running through these tests side by side, it is something more specific than that.

Claude Sonnet 4.6 is better at thinking through problems. ChatGPT-5.2 is better at presenting information clearly. Both are strong. One goes deeper.

If your work involves making real decisions, communicating with senior stakeholders, advising others, or producing content that needs to hold up to scrutiny, Claude is the better daily tool. If you frequently need to simplify or explain things to broad or younger audiences, ChatGPT earns its place in the mix.

No single tool is the best AI assistant of 2026 for every use case. But for most professional work, across most of the tasks that actually fill a workday, Claude leads.

FAQ:

1. What are the top marketing trends for 2026?

The top marketing trends for 2026 include Agentic AI, Generative Engine Optimization (GEO), and Hyper-Personalization.

2. Why are generalist marketers disappearing?

They are disappearing because AI can now handle general execution tasks faster and cheaper, leaving no room for "average" work.

3. What is a T-Shaped Marketer?

A T-shaped marketer has broad industry knowledge and deep, expert-level proficiency in one specific area, which is essential for the future of digital marketing.

4. Is SEO dead in 2026?

Traditional SEO is declining; it is being replaced by GEO, which requires specialist marketing skills to optimize for AI answers using Information Gain.

5. What skills should I learn in 2026?

Focus on AI Governance, Video Commerce, and Data Architecture to align with the marketing trends of 2026.

6. Will AI take my marketing job?

AI will replace generalists focused on execution; it will empower strategists who understand the future of digital marketing.

Written by Manasi Maheshwari

© 2026 Wits Innovation Lab, All rights reserved